A New Technology That Accelerates WAN Data Transfer to 40 Gbit/s

As cloud technologies evolve, there is a growing need for effective data and server management. A key part of this is simplifying infrastructure maintenance by moving business-generated data to cloud environments.

Meeting these new requirements multiplies the amount of data transferred between cloud environments over WAN links.

Developing and deploying new technologies to accelerate data transfer over WANs is therefore of paramount importance. This article discusses one such technology, developed by Fujitsu.

Today, data is most often transmitted between cloud environments over WANs at rates of 1 to 10 Gbit/s. However, modern IT solutions, including the Internet of Things and artificial intelligence, require even higher transfer rates.

Existing WAN acceleration technologies increase the effective transmission rate by reducing the amount of data transferred through compression or deduplication.
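To make the two techniques concrete, here is a minimal Python sketch of a software WAN accelerator. It assumes fixed 4 KiB chunks, SHA-256 fingerprints, and zlib compression, all illustrative choices rather than details from the article; the function names `reduce_for_wan` and `restore` are hypothetical. Chunks the receiver has already seen travel as a 32-byte reference, and new chunks travel compressed.

```python
import hashlib
import zlib

CHUNK_SIZE = 4096  # hypothetical fixed chunk size, chosen for illustration only


def reduce_for_wan(data: bytes, seen: set[bytes]) -> list[tuple[str, bytes]]:
    """Sender side: turn a byte stream into a list of WAN messages.

    Chunks already sent are replaced by their SHA-256 digest ("ref");
    new chunks are compressed with zlib and sent in full ("data").
    """
    messages = []
    for offset in range(0, len(data), CHUNK_SIZE):
        chunk = data[offset:offset + CHUNK_SIZE]
        digest = hashlib.sha256(chunk).digest()
        if digest in seen:
            messages.append(("ref", digest))  # deduplicated: only the hash crosses the WAN
        else:
            seen.add(digest)
            messages.append(("data", zlib.compress(chunk)))  # new chunk: sent compressed
    return messages


def restore(messages: list[tuple[str, bytes]], store: dict[bytes, bytes]) -> bytes:
    """Receiver side: rebuild the original stream from references and compressed chunks."""
    out = []
    for kind, payload in messages:
        if kind == "data":
            chunk = zlib.decompress(payload)
            store[hashlib.sha256(chunk).digest()] = chunk  # remember it for later references
        else:
            chunk = store[payload]
        out.append(chunk)
    return b"".join(out)


if __name__ == "__main__":
    # A backup-like stream with heavy repetition.
    original = (b"customer-record " * 256) * 8 + bytes(range(256)) * 16
    msgs = reduce_for_wan(original, set())
    wire_bytes = sum(len(payload) for _, payload in msgs)
    assert restore(msgs, {}) == original
    print(f"original: {len(original)} B, on the wire: {wire_bytes} B, "
          f"reduction: x{len(original) / wire_bytes:.1f}")
```

The effective transmission rate rises by roughly the reduction ratio printed at the end, since the link carries only the bytes actually put on the wire.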

However, on 10-gigabit WANs, the volume of transferred data is already approaching its maximum. Existing acceleration technologies running on cloud servers cannot significantly increase the data transfer rate, so data transfer becomes the bottleneck of the entire cloud infrastructure. To take cloud services to a qualitatively new level, it is necessary either to use processors with higher clock speeds or to apply WAN acceleration technologies based on different algorithms.
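The bottleneck argument can be captured in a deliberately simplified model, assumed here for illustration: an accelerator can deliver no more than the link rate multiplied by its data-reduction ratio, and no more than the rate at which it can process data. The function name `effective_rate_gbps` and all numbers below are hypothetical.

```python
def effective_rate_gbps(link_gbps: float, reduction_ratio: float, processing_gbps: float) -> float:
    """Simplified model (an assumption, not a measured law) of accelerated WAN throughput.

    link_gbps        raw WAN link capacity
    reduction_ratio  original bytes / bytes actually sent (compression + deduplication)
    processing_gbps  rate at which the accelerator can compress/deduplicate input data
    """
    return min(link_gbps * reduction_ratio, processing_gbps)


if __name__ == "__main__":
    RATIO = 3.0           # hypothetical data-reduction ratio
    SOFTWARE_GBPS = 12.0  # hypothetical rate at which CPU software can process data
    for link in (1.0, 10.0):
        rate = effective_rate_gbps(link, RATIO, SOFTWARE_GBPS)
        limit = "link" if link * RATIO <= SOFTWARE_GBPS else "processing"
        print(f"{link:g} Gbit/s link: {rate:g} Gbit/s effective "
              f"({limit}-bound, x{rate / link:g} speed-up)")
```

With these made-up numbers, acceleration triples a 1 Gbit/s link but adds only 20% on a 10 Gbit/s link, because processing, not the link, becomes the limit.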

How does it work?

Fujitsu has developed a WAN acceleration technology that enables real-time data transfer between cloud environments at speeds of up to 40 Gbit/s.

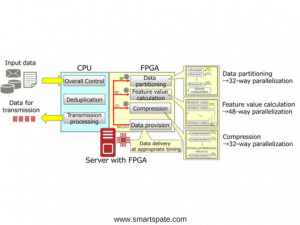

This speed is achieved by using several 10-gigabit channels simultaneously. The accelerators are field-programmable gate arrays (FPGAs) installed in the servers. The technology is designed to help transfer large amounts of data between clouds.
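Using several channels at once is a form of link aggregation. The article does not say how data is distributed across the 10-gigabit channels, so the sketch below assumes the simplest scheme: split the stream into numbered segments, deal them round-robin across channels, and restore the order by sequence number on arrival. `stripe`, `reassemble`, and the 64 KiB segment size are hypothetical.

```python
from itertools import cycle

SEGMENT_SIZE = 64 * 1024  # hypothetical segment size


def stripe(data: bytes, n_channels: int) -> list[list[tuple[int, bytes]]]:
    """Split a stream into numbered segments and deal them round-robin across channels."""
    channels: list[list[tuple[int, bytes]]] = [[] for _ in range(n_channels)]
    targets = cycle(range(n_channels))
    for seq, offset in enumerate(range(0, len(data), SEGMENT_SIZE)):
        channels[next(targets)].append((seq, data[offset:offset + SEGMENT_SIZE]))
    return channels


def reassemble(channels: list[list[tuple[int, bytes]]]) -> bytes:
    """Merge segments from all channels back into order using their sequence numbers."""
    segments = [seg for channel in channels for seg in channel]
    segments.sort(key=lambda seg: seg[0])
    return b"".join(payload for _, payload in segments)


if __name__ == "__main__":
    CHANNELS = 4  # e.g. four 10-gigabit channels, 40 Gbit/s in aggregate
    payload = bytes(range(256)) * 4096  # 1 MiB of sample data
    lanes = stripe(payload, CHANNELS)
    for i, lane in enumerate(lanes):
        print(f"channel {i}: {len(lane)} segments")
    assert reassemble(lanes) == payload
```

Round-robin keeps the channels evenly loaded when they have equal capacity; unequal or congested channels would call for a smarter distribution.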

The new development was made possible by dedicating a special computing unit to compression operations. This unit enables highly parallel operation of the main computing nodes, passing data between them at precisely scheduled times based on the predicted completion of each computation stage.
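Passing data "at exactly the specified time" resembles a static pipeline schedule: if each stage's duration can be predicted in advance, every hand-off time can be computed before any data moves. The sketch below is a generic illustration under that assumption, not Fujitsu's scheduler. The stage durations are made-up values, and the model assumes buffers between stages so a finished block never waits to leave.

```python
def schedule(n_blocks: int, stage_times: list[int]) -> list[list[tuple[int, int]]]:
    """Compute (start, finish) for every data block at every pipeline stage.

    A block enters stage s once (a) it has finished stage s-1 and
    (b) the previous block has finished stage s. Because stage durations
    are predicted in advance, all hand-off times are known up front.
    """
    table: list[list[tuple[int, int]]] = []
    for b in range(n_blocks):
        row: list[tuple[int, int]] = []
        for s, duration in enumerate(stage_times):
            ready = row[s - 1][1] if s > 0 else 0         # this block left the previous stage
            free = table[b - 1][s][1] if b > 0 else 0     # previous block left this stage
            start = max(ready, free)
            row.append((start, start + duration))
        table.append(row)
    return table


if __name__ == "__main__":
    STAGES = [2, 5, 3]  # hypothetical predicted durations (e.g. read, compress, send), in cycles
    plan = schedule(4, STAGES)
    for b, row in enumerate(plan):
        print(f"block {b}: {row}")
    print("pipelined finish:", plan[-1][-1][1])
    print("sequential finish:", 4 * sum(STAGES))
```

With these numbers the last block finishes at cycle 25 instead of 40, because the stages work on different blocks at the same time and the slowest stage sets the pace.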

The key features of the technology are described below.

1. FPGA parallelization technology using dedicated computing nodes

Fujitsu has developed an FPGA parallelization technology that significantly reduces the processing time required for data compression and deduplication. This is achieved by deploying dedicated computing nodes and parallelizing the computations to a high degree.

2. Optimized distribution of data processing between the CPU and the FPGA

Previously, lossless compression combined with deduplication required reading the data twice: once before and once after detecting duplicates. This increased resource consumption and slowed the system down. The new technology optimizes the use of computing resources by offloading the computational load to the FPGA.
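One way to read the two features together is sketched below, under stated assumptions rather than from Fujitsu's design: each chunk is read once and hashed and compressed in the same step, many chunks are processed in parallel (a thread pool stands in for the dedicated FPGA computing nodes), and a single sequential pass then decides which chunks are duplicates. Compressing chunks that later turn out to be duplicates wastes some work, a trade-off that is easier to accept on dedicated hardware than on a shared CPU. `fingerprint_and_compress`, `reduce_parallel`, and the chunk size are hypothetical.

```python
import hashlib
import zlib
from concurrent.futures import ThreadPoolExecutor

CHUNK_SIZE = 4096  # hypothetical chunk size


def fingerprint_and_compress(chunk: bytes) -> tuple[bytes, bytes]:
    """Single pass over an in-memory chunk: fingerprint it and compress it together."""
    return hashlib.sha256(chunk).digest(), zlib.compress(chunk)


def reduce_parallel(data: bytes, workers: int = 4) -> list[tuple[str, bytes]]:
    """Hash and compress all chunks in parallel, then deduplicate in one ordered pass."""
    chunks = [data[i:i + CHUNK_SIZE] for i in range(0, len(data), CHUNK_SIZE)]
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # pool.map returns results in input order, so chunk order is preserved.
        results = list(pool.map(fingerprint_and_compress, chunks))
    seen: set[bytes] = set()
    messages: list[tuple[str, bytes]] = []
    for digest, compressed in results:
        if digest in seen:
            messages.append(("ref", digest))  # duplicate: its compressed form is discarded
        else:
            seen.add(digest)
            messages.append(("data", compressed))
    return messages


if __name__ == "__main__":
    sample = b"x" * CHUNK_SIZE * 3 + b"y" * 100
    print([kind for kind, _ in reduce_parallel(sample)])
```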

Results

Fujitsu specialists measured the transfer rate for large volumes of business-generated data. In a test environment, servers equipped with FPGAs were interconnected over 10-gigabit channels. In a test simulating a data backup, a transfer rate of 40 Gbit/s was recorded.

This is a new industry record: the system is approximately 30 times faster than a system equipped with only a CPU. The new technology will make it possible to create a new generation of cloud services that share large amounts of data among multiple companies across several geographically dispersed data centers.
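Taking the two reported figures at face value, and assuming both describe the same throughput measurement, the implied rate of the CPU-only system follows from simple division:

```latex
R_{\text{CPU}} \approx \frac{R_{\text{FPGA}}}{30} = \frac{40~\text{Gbit/s}}{30} \approx 1.3~\text{Gbit/s}
```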

Fujitsu plans to deliver this technology as an application running on FPGA-equipped servers, primarily for use in cloud environments. The company's laboratory will continue testing the new development, and the commercial launch is scheduled for mid-2018.