How To Develop A Highly Loaded WebSocket Service For Your Apps

How to create a web service that will interact with users in real time, while maintaining several hundred thousand connections at the same time?

Hello everyone, my name is Paul Brook, and I’m a developer. Recently I came across such a problem – to create an interactive service where the user can get quick bonuses for their actions. The matter was complicated by the fact that the project had rather high demands on the load, and the deadlines were extremely low.

In this article, I will describe how I chose the solution for implementing a WebSocket server for the complex requirements of the project, what problems I encountered in the development process, and also I would like to say a few words about how the configuration of the Linux kernel can help in achieving the above goals.

At the end of the article, useful links to development, testing, and monitoring tools.

Tasks and requirements

Requirements for the function of the project:

- To make it possible to track the presence of the user on the resource and track the viewing time;

- To provide a fast exchange of messages between the client and the server, since the time for receiving the bonus by the user is strictly limited;

- Create a dynamic interactive interface with synchronization of all actions when the user interacts with the service through several tabs or devices at the same time.

Load Requirements:

- The application must withstand at least 150,000 online users.

The term of realization – 1 month.

Choice of technology

Comparing the tasks and requirements of the project, I came to the conclusion that it is best to use WebSocket technology to develop it. It provides a permanent connection to the server, eliminating overhead for a new connection for each message that is present in the implementation of Ajax and long-polling technologies. This allows obtaining the necessary high speed of messaging in combination with adequate consumption of resources, which is very important at high loads.

- Also, due to the fact that installation and disconnection of the connection are two distinct events, it is possible to accurately track the time of the user’s presence on the site.

Given the rather limited project timeframe, I decided to develop using the WebSocket framework. I learned several options, the most interesting of which seemed to me PHP ReactPHP, PHP Ratchet, Node.JS web sockets/ws, PHP Swoole, PHP Workerman, Go Gorilla, Elixir Phoenix. Their capacity in terms of load tested on a laptop with an Intel Core i5 processor and 4 GB of RAM (such resources were enough for research).

PHP Workerman is an asynchronous event-oriented framework. Its capabilities are exhausted by the simplest implementation of the WebSocket server and the ability to work with the libevent library needed to handle asynchronous event notifications. The code is at the level of PHP 5.3 and does not meet any standards. For me, the main disadvantage was that the framework does not allow the implementation of high-load projects. On the test stand, the developed Hello World application could not hold thousands of connections.

ReactPHP and Ratchet are comparable in their capabilities to Workerman. Ratchet inside depends on ReactPHP, it also works through libevent and does not allow creating a solution for high loads.

Swoole – an interesting framework written in C, connects as an extension for PHP, has tools for parallel programming. Unfortunately, I found that the framework is not stable enough: on a test bench, it cut off every second connection.

Next, I looked at Node.JS WS. This framework showed quite good results – about 5 thousand connections on the test bench without additional settings. However, my project implied significantly higher loads, so I chose the Go Gorilla + Echo Framework and Elixir Phoenix frameworks. These options have been tested in more detail.

Stress Testing

For testing using tools such as artillery, Gatling and service flood.io.

The purpose of the testing was to study the consumption of CPU and memory resources. The characteristics of the machine were the same – the processor Intel iCore 5 and 4 GB of RAM. Tests were conducted using the example of the simplest chats on Go and Phoenix.

Here is a simple chat application that normally functioned on a machine of the specified capacity with a load of 25-30 thousand users:

config: target: "ws://127.0.0.1:8080/ws" phases - duration:6 arrivalCount: 10000 ws: rejectUnauthorized: false scenarios: - engine: “ws” flow - send “hello” - think 2 - send “world”

Class LoadSimulation extends Simulation {

val users = Integer.getInteger (“threads”, 30000)

val rampup = java.lang.Long.getLong (“rampup”, 30L)

val duration = java.lang.Long.getLong (“duration”, 1200L)

val httpConf = http

.wsBaseURL(“ws://8.8.8.8/socket”)

val scn = scenario(“WebSocket”)

.exes(ws(“Connect WS”).open(“/websocket?vsn=2.0.0”))

.exes(

ws(“Auth”)

sendText(“““[“1”, “1”, “my:channel”, “php_join”, {}]”””)

)

.forever() {

exes(

ws(“Heartbeat”).sendText(“““[null, “2”, “phoenix”, “heartbeat”, {}]”””)

)

.pause(30)

}

.exes(ws(“Close WS”).close)

setUp(scn.inject(rampUsers(users) over (rampup seconds)))

.maxDuration(duration)

.protocols(httpConf)

Test launches have shown that everything runs smoothly on a machine of the specified capacity with a load of 25-30 thousand users.

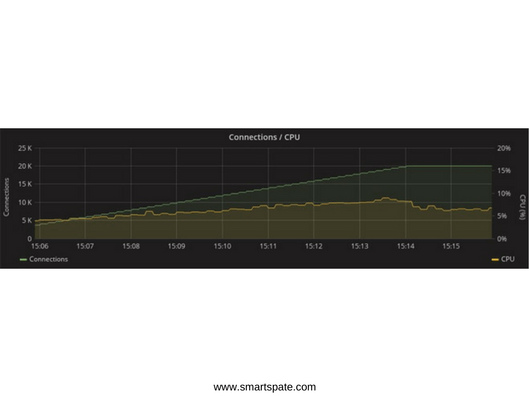

CPU Consumption:

Phoenix

Gorilla

The consumption of RAM at a load of 20 thousand connections reached 2 GB in the case of both frameworks:

Phoenix

Gorilla

Go even outperforms Elixir in performance, but the Phoenix Framework provides much more features. On the graph below, which shows the consumption of network resources, you can see that in the Phoenix test, 1.5 times more messages are transmitted.

This is due to the fact that this framework already has a mechanism of heartbeats (periodic synchronizing signals) in the initial “boxed” version, which Gorilla will have to implement independently. In the conditions of limited time, any additional work was a weighty argument in favor of Phoenix.

Phoenix

Gorilla

About Phoenix Framework

Phoenix is a classic MVC framework, quite similar to Rails, which is not surprising, as one of its developers and creator of the Elixir language is Jose Valim, one of the main creators of Ruby on Rails. Some similarities can be seen even in the syntax.

Phoenix:

defmodule Benchmarker.Router do use Phoenix.Router alias Benchmarker.Controllers get "/:title", Controllers.Pages, :index, as: :page end

Rails:

Benchmarker::Application.routes.draw do root to: "pages#index" get "/:title", to: "pages#index", as: :page end

Mix – automation utility for Elixir projects

When using Phoenix and the Elixir language, a significant number of processes are performed using the Mix utility. This is a build tool, which solves many different tasks for creating, compiling and testing an application, managing its dependencies, and some other processes.

The mix is a key part of any Elixir project. This utility is in no way inferior and does not exceed analogs from other languages, but does its job perfectly well. And because the Elixir code runs on the Erlang virtual machine, it becomes possible to add any libraries from the world of Erlang to dependencies. In addition, along with Erlang VM, you get convenient and secure concurrency, as well as high fault tolerance.

Problems and solutions

With all the virtues of the Phoenix, there are its drawbacks. One of them is the difficulty of solving such a task as monitoring active users on the site in conditions of high load.

- The fact is that users can connect to different nodes of the application, and each node will know only about their own clients. To list the active users, you will have to poll all application nodes.

To solve these problems in Phoenix there is a Presence module that gives the developer the ability to track active users in just three lines of code. It uses the Hart bit mechanism and non-conflicting replication within the cluster and the PubSub server for message exchange between nodes.

It sounds good, but in fact, it turns out roughly the following. Hundreds of thousands of connecting and disconnecting users generate millions of messages for synchronization between nodes, which means that CPU consumption goes over all the allowed limits, and even the Redis PubSub connection does not save the situation. The list of users is duplicated on each node, and calculating the diff with each new connection becomes more expensive and more expensive – and this is because the calculation is performed on each of the active nodes.

In this situation, the mark of even 100,000 customers becomes unattainable. I could not find other ready solutions for this task, so I decided to do the following: assign a duty to monitor the presence of online users on the database.

At first glance, this is a good idea, in which there is nothing difficult: it is enough to store the last activity field in the database and periodically update it. Unfortunately, for projects with a high load, this is not an option: when the number of users reaches several hundred thousand, the system will not cope with the millions coming from them hart bits.

I chose a less trivial, but more productive solution. When a user is connected, a unique row is created for the user in the table, which stores its ID, the exact time of entry, and the list of nodes to which it is connected. The list of nodes is stored in the JSONB field, and if there is a conflict between the rows, it’s enough to update it.

create table watching_times ( id serial not null constraint watching_times_pkey primary key, user_id integer, join_at timestamp, terminate_at timestamp, nodes jsonb ); create unique index watching_times_not_null_uni_idx on watching_times (user_id, terminate_at) where (terminate_at IS NOT NULL); create unique index watching_times_null_uni_idx on watching_times (user_id) where (terminate_at IS NULL);

Here such request is responsible for user input:

INSERT INTO watching_times (

user_id,

join_at,

terminate_at,

nodes

)

VALUES (1, NOW(), NULL, '{nl@192.168.1.101”: 1}')

ON CONFLICT (user_id)

WHERE terminate_at IS NULL

DO UPDATE SET nodes = watching_times.nodes ||

CONCAT(

'{nl@192.168.1.101:',

COALESCE(watching_times.nodes->>'nl@192.168.1.101', '0')::int + 1,

'}'

)::JSONB

RETURNING id;

The list of nodes looks like this:

If a user opens a service in a second window or on another device, he can go to another node, and then it will also be added to the list. If it hits the same node as in the first window, the number opposite the name of that node in the list will increase. This number reflects the number of active user connections to a specific node.

Here is what the request looks like, which goes to the database when the session is closed:

UPDATE watching_times

SET nodes

CASE WHEN

(

CONCAT(

'{“nl@192.168.1.101”: ',

COALESCE(watching_times.nodes ->> 'nl@192.168.1.101', '0') :: INT - 1,

'}'

)::JSONB ->>'nl@192.168.1.101'

)::INT <= 0

THEN

(watching_times.nodes - 'nl@192.168.1.101')

ELSE

CONCAT(

'{“nl@192.168.1.101”: ',

COALESCE(watching_times.nodes ->> 'nl@192.168.1.101', '0') :: INT - 1,

'}'

)::JSONB

END

),

terminate_at = (CASE WHEN ... = '{}' :: JSONB THEN NOW() ELSE NULL END)

WHERE id = 1;

List of nodes:

When the session is closed on a certain node, the connection counter in the database is reduced by one, and when the node reaches zero, the node is removed from the list. When the list of nodes is completely empty, this moment will be fixed as the final time for the user to exit.

- This approach has made it possible not only to monitor the presence of the user online and the viewing time but also to filter these sessions according to various criteria.

In all this there is only one drawback: if the node fails, all its users “hang” online. To solve this problem, we have a daemon that periodically cleans the database from such records, but until now this was not required. Analysis of the load and monitoring of the cluster after the release of the project in production showed that there were no downs of the nodes and this mechanism was not used.

There were other difficulties, but they are more specific, so it’s worth turning to the issue of fault tolerance of the application.

Configuring the Linux kernel to improve performance

Write a good application in a productive language – this is only half the case, without literate DevOps to achieve at least some high results is impossible.

The first barrier to the target load was the Linux network kernel. It was necessary to make some adjustments to achieve more rational use of its resources.

Each open socket is a file descriptor in Linux, and their number is limited. The reason for the limit is that for each open file in the kernel, a C structure is created that takes up unreclaimable kernel memory.

To use memory to the maximum, I set very high values of the sizes of the receive and transmit buffers, and also increased the size of the TCP buffers of the sockets. The values here are set not in bytes, but in memory pages, usually one page is 4 KB, and the maximum number of open sockets waiting for connection for high-loaded servers, I set the value to 15 thousand.

Limits of file descriptors:

#! / usr / bin / env bash sysctl -w 'fs.nr_open = 10000000' # Maximum number of open file descriptors sysctl -w 'net.core.rmem_max = 12582912' # The maximum size of the receive buffers of all types sysctl -w 'net.core.wmem_max = 12582912' # The maximum size of the transmit buffers of all types sysctl -w 'net.ipv4.tcp_mem = 10240 87380 12582912' # TCP socket memory size sysctl -w 'net.ipv4.tcp_rmem = 10240 87380 12582912' # receive buffer size sysctl -w 'net.ipv4.tcp_wmem = 10240 87380 12582912' # send buffer size <code> sysctl -w 'net.core.somaxconn = 15000' # Maximum number of open sockets waiting for connection

If you are using nginx in front of a cowboy server, you should also consider increasing its limits. The worker_connections and worker_rlimit_nofile directives are responsible for this.

The second obstacle is not so obvious. If you run a similar application in a distributed mode, you can notice a sharp increase in CPU resource consumption with an increase in the number of connections. The problem is that Erlang works by default with Poll system calls. In version 2.6 of the Linux kernel, there is an Epoll that can provide a more efficient mechanism for applications that process a large number of concurrent connections-with O (1) complexity, unlike Poll, which has O (n) complexity.

Fortunately, the Epoll mode is enabled by one flag: + K true, I also recommend increasing the maximum number of processes generated by your application and the maximum number of open ports using the + P and + Q flags, respectively.

Poll vs. Epol

#!/usr/bin/env bash

Elixir --name ${MIX_NODE_NAME}@${MIX_HOST} --erl “-config sys.config -setcookie ${ERL_MAGIC_COOKIE} +K true +Q 500000 +P 4194304” -S mix phx.server

The third problem is more individual, and not everyone can face it. On this project, the process of automatic de-modeling and dynamic scaling was organized with the help of the Chef and Kubernetes. Kubernetes allows you to quickly deploy Docker-containers on a large number of hosts, and it’s very convenient, but you can not know the ip-address of a new host in advance, and if you do not register it in the Erlang config, you will not be able to connect a new node to the distributed application.

Fortunately, there is a libcluster library to solve these problems. Communicating with Kubernetes on the API, it real-time learns about the creation of new nodes and registers them in the Erlang cluster.

config :libcluster, topologies: [ k8s: [ strategy: Cluster.Strategy.Kubernetes, config: [ kubernetes_selector: “app=my -backend”, kubernetes_node_basename: “my -backend”]]]

Results and prospects

The chosen framework, combined with the correct configuration of the servers, made it possible to achieve all the goals of the project: in the set timeframe (1 month), develop an interactive web service that communicates with users in real time and withstands loads from 150,000 connections and above.

After the launch of the project in production, monitoring was conducted, which showed the following results: with a maximum number of connections up to 800,000, the CPU consumption is 45%. The average load is 29% with 600 thousand connections.

In this graph – the memory consumption when working in a cluster of 10 machines, each of which has 8 GB of RAM.

As for the basic working tools in this project, Elixir and Phoenix Framework, I have every reason to believe that in the coming years they will become as popular as Ruby and Rails in their time, so it makes sense to start mastering them right now.

References:

Development:

elixir-lang.org

phoenixframework.org

Stress Testing:

gatling.io

flood.io

Monitoring:

prometheus.io

grafana.com